Navigate through Containers, available on the left panel. Browsing through different directories is intuitive as you move from a year to a month to a day. You can then use the "Storage Browser" on the left panel to find the right JSON file that you want to investigate. Navigate storage account to download Airflow logsĪfter a diagnostic setting is created for archiving Airflow task logs into a storage account, you can navigate to the storage account overview page. Verify the subscription and the storage account to which you want to archive the logs. Select Diagnostic Settings from the left panel Open Microsoft Azure Data Manager for Energy' Overview page It should be a storage account, an Event Hubs namespace or an event hub.įollow the following steps to set up Diagnostic Settings: Each diagnostic setting can define one or more destinations but no more than one destination of a particular type. All Azure services share the same set of possible destinations. One or more destinations to send the logs. The set of categories will vary for each Azure service. Ensure a unique name is set for each log.Ĭategory of logs to send to each of the destinations. Each Diagnostic Setting has three basic parts: Part To access logs via any of the above two options, you need to create a Diagnostic Setting. Airflow logs can be integrated with Azure Monitor in the following ways: We collect Airflow logs for internal troubleshooting and debugging purposes. The storage account doesn’t have to be in the same subscription as your Log Analytics workspace.Įnabling diagnostic settings to collect logs in a storage accountĮvery Azure Data Manager for Energy instance comes inbuilt with an Azure Data Factory-managed Airflow instance. It will be used to store JSON dumps of Airflow logs. Useful Resource: Create a log analytics workspace in Azure portal. This workspace will be used to query the Airflow logs using the Kusto Query Language (KQL) query editor in the Log Analytics Workspace.

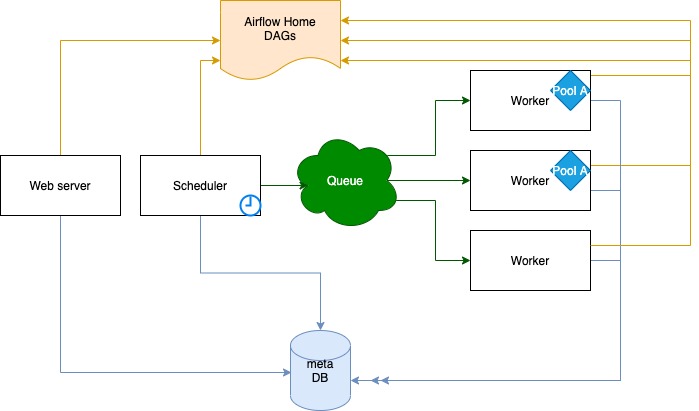

This integration feature helps you debug Airflow DAG ( Directed Acyclic Graph) run failures. Just running locally, no containers.In this article, you'll learn how to start collecting Airflow Logs for your Microsoft Azure Data Manager for Energy instances into Azure Monitor. # Repeat as necessary for other process typesĬentOS 7.4 Versions of Apache Airflow Providers Kill $(cat $AIRFLOW_HOME/airflow-scheduler.pid) Kill $(cat $AIRFLOW_HOME/airflow-webserver-monitor.pid)Īirflow scheduler -log-file=$AIRFLOW_HOME/scheduler.log -stdout=$AIRFLOW_HOME/scheduler.out -stderr=$AIRFLOW_HOME/scheduler.err # no logs created, stdout/stderr not redirectedĪirflow scheduler -log-file=$AIRFLOW_HOME/scheduler.log -stdout=$AIRFLOW_HOME/scheduler.out -stderr=$AIRFLOW_HOME/scheduler.err -daemon # seems to work as expected With a fresh install (no changes to airflow.cfg, etc):Īirflow webserver -log-file=$AIRFLOW_HOME/webserver.log -stdout=$AIRFLOW_HOME/webserver.out -stderr=$AIRFLOW_HOME/webserver.err # no logs created, stdout/stderr not redirectedĪirflow webserver -log-file=$AIRFLOW_HOME/webserver.log -stdout=$AIRFLOW_HOME/webserver.out -stderr=$AIRFLOW_HOME/webserver.err -daemon # logs created but remain empty until webserver is killed, stdout/stderr suppressed after startup If the webserver behavior cannot be changed, maybe these options should just be removed. With airflow webserver in particular, these arguments seem to be mostly useless since no (useful) logs are written to a file, regardless of whether or not -daemon is specified.

If there are certain combinations of args that are incompatible, they should be noted as such in the -help and/or emit a warning at runtime. (Though worth noting that -access-logfile and -error-logfile work as expected, with or without -daemon.) What you expected to happenĬommand line args should be respected whenever possible. However, webserver does not write any logs except for a few lines on shutdown, and since stdout/stderr are suppressed, all useful logs are lost. When -daemon is added, most process types will suppress stdout/stderr and write to the specified log files as expected. This can be a bit confusing in environments where daemonization is handled externally, e.g. No log files are created, and logs continue being printed to stdout/stderr. Some airflow processes (I've tested webserver, scheduler, triggerer, celery worker, and celery flower) ignore the -log-file, -stdout, and -stderr options when running without -daemon.
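When a process runs in the foreground and daemonization is handled externally, one possible workaround for the ignored stdout/stderr options is to let the shell do the redirection itself. The following is only a minimal sketch, assuming a POSIX shell; `run_with_logs` is a hypothetical helper name, not part of Airflow, and the file paths in the usage comment are illustrative.

```shell
#!/bin/sh
# Hypothetical helper (not part of Airflow): run a command in the foreground
# while appending its stdout and stderr to separate files.
# Usage: run_with_logs OUT_FILE ERR_FILE COMMAND [ARGS...]
run_with_logs() {
    out_file=$1
    err_file=$2
    shift 2
    # The shell performs the redirection, so the result does not depend on
    # the command honoring its own stdout/stderr flags.
    "$@" >>"$out_file" 2>>"$err_file"
}

# Example invocation (assumes AIRFLOW_HOME is set; paths are illustrative):
# run_with_logs "$AIRFLOW_HOME/scheduler.out" "$AIRFLOW_HOME/scheduler.err" airflow scheduler
```

The function returns the exit status of the wrapped command, so an external supervisor can still react to failures. It does not replace the log-file option, which only the process itself can honor.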
